Ongoing since March 2024

As AI gets better at mastering public knowledge, private knowledge (your notes, your thinking, your accumulated experience) becomes the differentiator. It also becomes the key to personalization. I've been taking daily notes on everything in my life for over three years using the Zettelkasten method. That's over 1 million tokens' worth of thinking, connections, and accumulated understanding about me.

What happens when you feed 1 million tokens of your personal notes to an AI and ask it not just to answer questions, but to teach you something new? To find the corners of your own thinking that you're only just becoming aware of yourself?

This is the question that led me, in March of 2024, to create a proactive research assistant—an automated system that reads my entire knowledge base each night and generates personalized research reports on topics I didn't even know I wanted to explore.

More than a year after I began generating daily reports, OpenAI released ChatGPT Pulse, which is built on nearly the same concept. After that, I updated my system to generate a cover image for each report using Gemini's nano-banana. Here are a few examples of reports:

The System: How It Works

Each night while I sleep, an AI reads my entire second brain.

Well, not quite. But close. The system ingests around 1 million tokens of my Roam Research notes: everything from daily journals to technical explorations, creative ideas to personal goals. Gemini 1.5 was the first model to offer this kind of long context window, which is what made this possible.

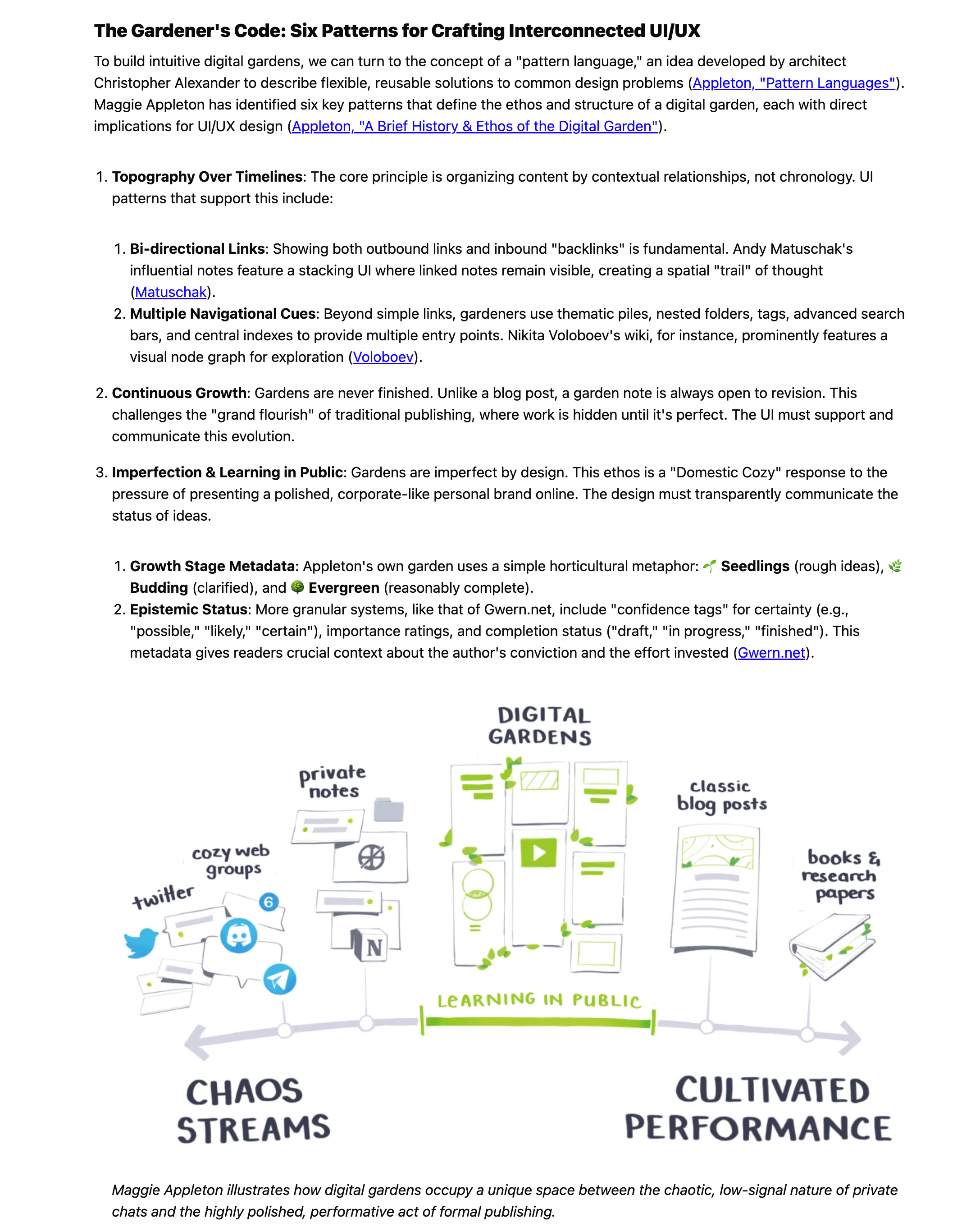

The process is fully automated and agentic:

Stage 1: Topic Discovery

- The system analyzes my recent notes, especially daily entries and weekly summaries

- It looks for patterns, recurring themes, and emerging interests

- It generates 3 main topics that would be compelling to explore further

- For each topic, it identifies 3 sub-topics that go deep—not surface-level tutorials, but substantive knowledge
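Three topics with three sub-topics each is where the nine daily reports come from. Here is a minimal sketch of how that plan could be represented and validated, assuming the model is asked to reply in JSON. The JSON shape and the names `Topic`, `parse_topic_plan`, and `report_queue` are hypothetical, chosen for illustration rather than taken from the actual system.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Topic:
    title: str
    sub_topics: list[str] = field(default_factory=list)


def parse_topic_plan(raw: str, n_topics: int = 3, n_sub: int = 3) -> list[Topic]:
    """Parse the model's JSON reply and enforce the 3 x 3 shape.

    Assumed reply format (an illustration, not a real API):
    {"topics": [{"title": "...", "sub_topics": ["...", "...", "..."]}, ...]}
    """
    data = json.loads(raw)
    topics = [Topic(t["title"], list(t["sub_topics"])) for t in data["topics"]]
    if len(topics) != n_topics:
        raise ValueError(f"expected {n_topics} topics, got {len(topics)}")
    for topic in topics:
        if len(topic.sub_topics) != n_sub:
            raise ValueError(f"{topic.title!r}: expected {n_sub} sub-topics")
    return topics


def report_queue(topics: list[Topic]) -> list[tuple[str, str]]:
    """Flatten the plan into (topic, sub_topic) pairs, one per report."""
    return [(t.title, s) for t in topics for s in t.sub_topics]
```

Validating the shape up front means a malformed reply fails in Stage 1 instead of quietly producing fewer reports later in the night.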

Stage 2: Research Gathering

- For each sub-topic, it generates specific search queries

- It performs Google Custom Search to find relevant sources

- It scrapes the top results and evaluates their quality

- It decides whether a page is likely to link to other interesting content and, if so, follows those links, building a web of connected knowledge

- It filters out low-quality content and prioritizes substantive material

- It then decides whether another search query should be generated to collect more sources
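The gathering stage is a loop: search, scrape, rate, optionally follow links, then decide whether to search again. The sketch below shows that control flow only. The search, scraping, and model calls are passed in as plain functions, so nothing here depends on a specific API. Every name (`Page`, `gather_sources`, `follow`, `next_query`) and every default limit is an assumption made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Page:
    url: str
    text: str
    links: list[str]


def gather_sources(
    query: str,
    search: Callable[[str], list[str]],   # query -> result URLs
    scrape: Callable[[str], Page],        # URL -> scraped page
    rate: Callable[[Page], int],          # page -> quality score (1-10)
    follow: Callable[[Page], bool],       # are this page's links worth following?
    next_query: Callable[[str, list[Page]], Optional[str]],  # None = enough sources
    min_rating: int = 6,
    max_rounds: int = 3,
    max_pages: int = 20,
) -> list[Page]:
    kept: list[Page] = []
    seen: set[str] = set()
    current: Optional[str] = query
    rounds = 0
    while current is not None and rounds < max_rounds:
        rounds += 1
        frontier = list(search(current))
        while frontier and len(seen) < max_pages:
            url = frontier.pop(0)
            if url in seen:
                continue
            seen.add(url)
            page = scrape(url)
            if rate(page) < min_rating:
                continue  # drop low-quality pages; their links are not followed
            kept.append(page)
            if follow(page):
                frontier.extend(page.links)  # grow the web of connected sources
        # Let the caller (for example, an LLM) decide whether to search again.
        current = next_query(current, kept)
    return kept
```

The `max_rounds` and `max_pages` caps are there because an agent that follows links and writes its own follow-up queries can otherwise run far longer than intended.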

Stage 3: Report Generation

- For each sub-topic, it creates an outline based on the scraped content

- It writes a detailed research report synthesizing the findings

- It selects one aspect to expand upon with deeper analysis

- Each report is formatted in markdown, ready to read

Stage 4: Delivery & Feedback Loop

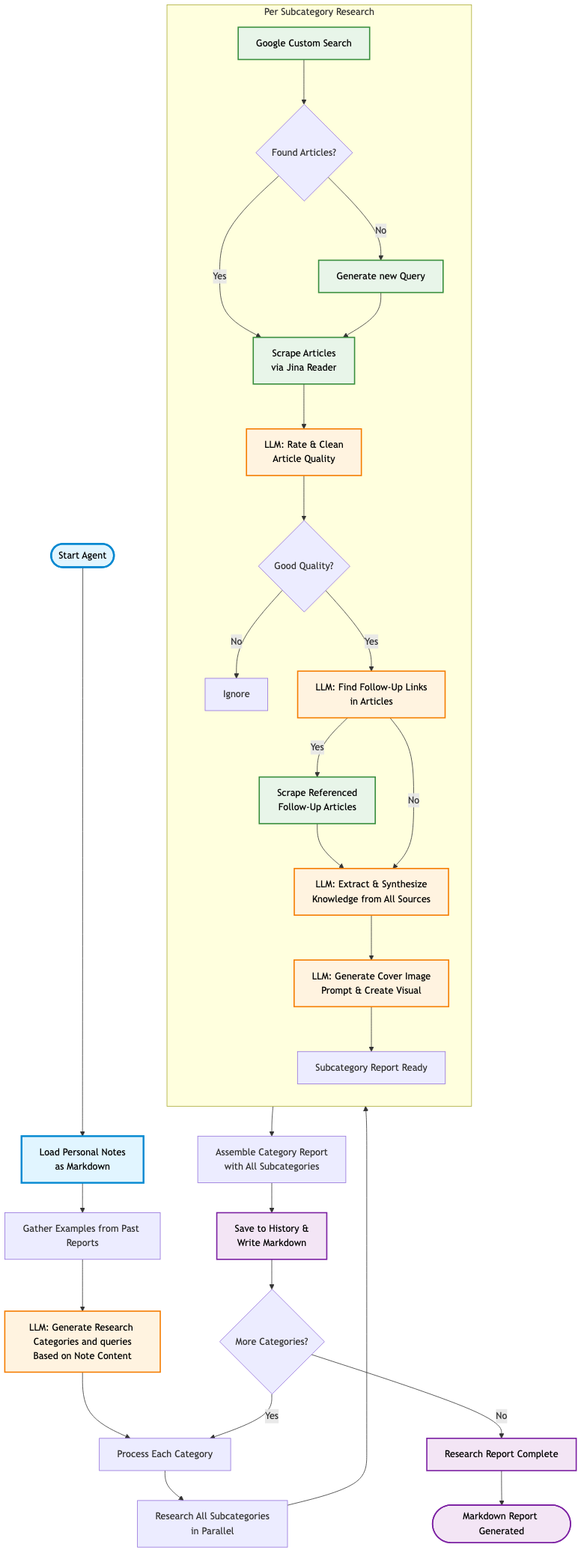

- Each morning I receive 9 new reports in my inbox

- I can mark reports as "favorites" which influences future topic selection

- I can ask follow-up questions about any report using the source material

The inbox interface showing daily research reports

The Experience: Unexpected Discoveries

It has become a source of inspiration in ways I didn't anticipate.

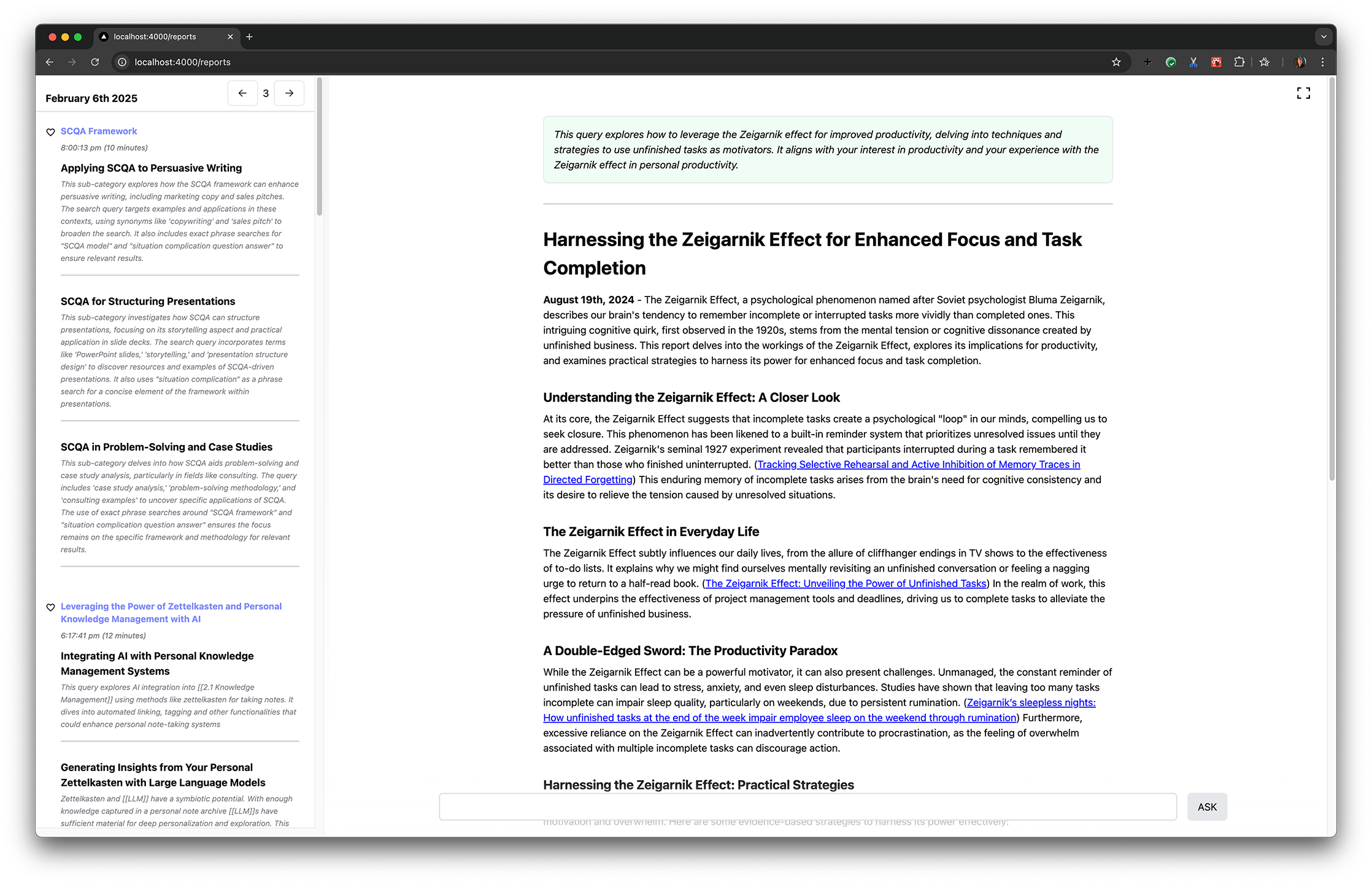

Some mornings I wake up to deep dives on design history:

A research report that introduced me to interesting new designers

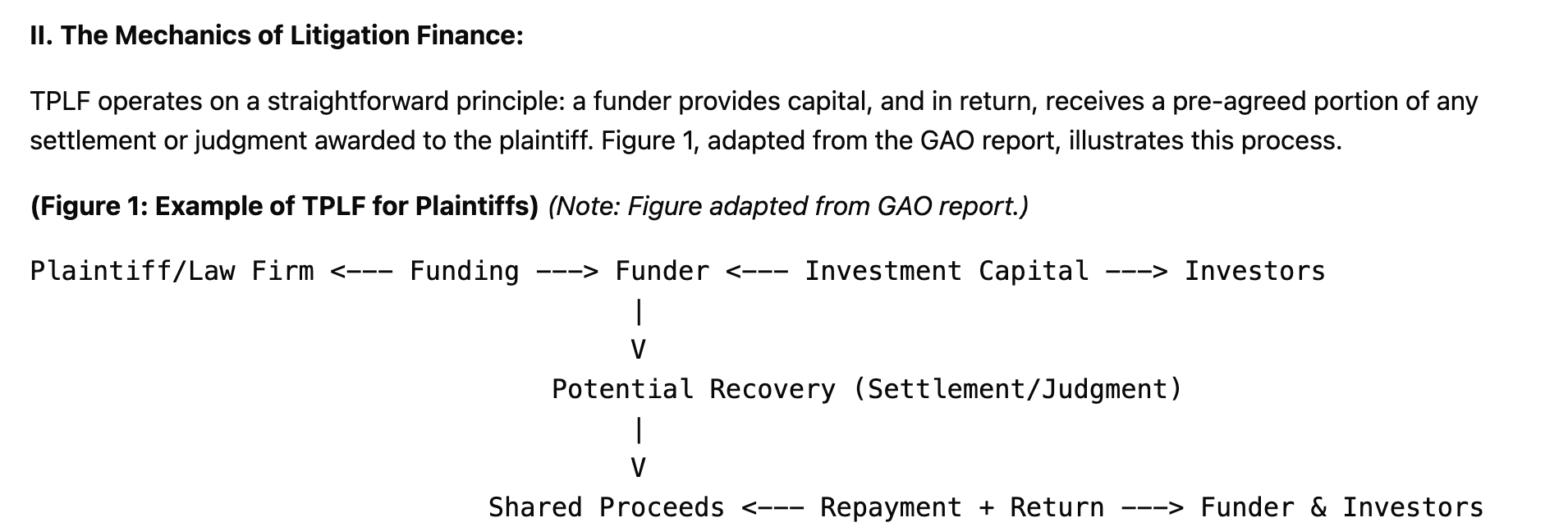

Other days, it introduces me to entirely new domains I would never have thought to explore on my own:

An unexpected report on litigation finance, complete with process diagrams

This happened because the AI noticed connections in my notes—perhaps I'd written about financial systems, or legal processes, or alternative investment strategies. It saw patterns I hadn't consciously connected and said, "You might find this interesting."

This is different from traditional search or even AI chat. I'm not asking questions. I'm being presented with knowledge that's been curated based on everything I've ever written down, but that still surprises me.

The favorites feature provides human-in-the-loop feedback: when I mark a report as particularly valuable, that information is provided to the prompt to inform the topic selection process. The system learns not just what I write about, but what kind of research actually resonates with me.
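Feeding favorites back into topic selection can be as simple as adding them to the prompt. The sketch below shows one way to do that. The prompt wording, the function name `build_topic_prompt`, and the cap of 20 favorites are all assumptions for illustration, not the prompt the system actually uses.

```python
def build_topic_prompt(notes: str, favorites: list[str], max_favorites: int = 20) -> str:
    """Combine the notes with titles of previously favorited reports."""
    recent = favorites[-max_favorites:]  # keep only the most recent favorites
    if recent:
        fav_block = "\n".join(f"- {title}" for title in recent)
    else:
        fav_block = "(none yet)"
    return (
        "You are choosing research topics for the author of the notes below.\n"
        "Propose 3 topics, each with 3 deep sub-topics.\n\n"
        "Reports the author previously marked as favorites:\n"
        f"{fav_block}\n\n"
        "Treat the favorites as a signal of what resonates, not a list to repeat.\n\n"
        "NOTES:\n"
        f"{notes}"
    )
```

The last instruction line is there because favorites are meant to steer topic selection, not to make every future report a repeat of past ones.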

The Implications: Private Knowledge in the Age of Public AI

This project exists at an interesting intersection: the rise of AI that can master all public knowledge, and the growing value of private knowledge systems.

As LLMs become increasingly capable with publicly available information, the knowledge that derives its value from limited accessibility becomes more important. Your personal knowledge base—your accumulated notes—represents a kind of knowledge that AI systems don't have access to. It's context-dependent, relationship-rich, and full of tacit understanding that resists algorithmic replication.

What makes this research assistant unique is that it operates at the boundary between these two worlds:

- It leverages public knowledge (web search, articles, research papers)

- But it's guided by private knowledge (my notes, my interests, my context)

- And it generates new private knowledge (personalized research reports)

The long context window is crucial here. This isn't about RAG (Retrieval-Augmented Generation), where you selectively pull in relevant chunks. The AI has access to everything. It can see connections across domains, notice patterns across years of notes, and understand context that would be lost in fragmented retrieval.

This represents a shift in how we think about AI-assisted knowledge work. It's not about replacing human thinking—it's about creating a partnership where the AI has deep access to your thinking and can help you extend it in directions you hadn't consciously considered.

The Process: How You Could Do This

If you have your own knowledge base and want to experiment with something similar, here's the high-level architecture:

Requirements:

- A substantial personal knowledge base (ideally in markdown or structured format)

- Access to a long-context LLM, such as Gemini or Claude Sonnet 4+

- Google Custom Search API for research queries

- Web scraping capability (such as Crawl4AI or Jina.ai)

- A workflow orchestration system for the agentic process

Core Workflow:

- Export & Aggregate: Combine your notes into a single context

- Topic Extraction: Use a carefully crafted prompt to identify research topics based on recent activity and overall patterns

- Search & Scrape: Generate search queries, fetch results, extract meaningful content

- Quality Filtering: Evaluate sources and prioritize high-quality information. I found that report quality improved when I sent the scraped content to a cheaper LLM and asked it to reduce the content to just the relevant information and to rate it.

- Report Generation: Create structured, readable research reports
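The quality-filtering step is the one most worth sketching. The idea is to pass each scraped page through a cheaper model that trims it to the relevant parts and rates it, then keep only the pages above a threshold. In the sketch below, `cheap_llm` stands in for whatever model call you use. The prompt text, the JSON reply format, the 1 to 10 scale, and the threshold of 6 are assumptions for illustration.

```python
import json
from dataclasses import dataclass
from typing import Callable

CONDENSE_PROMPT = (
    "Sub-topic: {sub_topic}\n\n"
    "Reduce the page below to only the information relevant to the sub-topic, "
    "then rate its usefulness from 1 to 10.\n"
    'Reply as JSON: {{"relevant_text": "...", "rating": 7}}\n\n'
    "PAGE:\n{page_text}"
)


@dataclass
class Condensed:
    url: str
    relevant_text: str
    rating: int


def condense_and_rate(
    sub_topic: str,
    pages: dict[str, str],            # url -> scraped text
    cheap_llm: Callable[[str], str],  # prompt -> raw model reply (hypothetical)
    min_rating: int = 6,
) -> list[Condensed]:
    kept: list[Condensed] = []
    for url, text in pages.items():
        reply = cheap_llm(CONDENSE_PROMPT.format(sub_topic=sub_topic, page_text=text))
        try:
            data = json.loads(reply)
            item = Condensed(url, str(data["relevant_text"]), int(data["rating"]))
        except (ValueError, KeyError, TypeError):
            continue  # skip replies that are not the JSON we asked for
        if item.rating >= min_rating:
            kept.append(item)
    # Best-rated sources first, so the report writer sees them at the top.
    return sorted(kept, key=lambda c: c.rating, reverse=True)
```

Condensing before report generation also shrinks what the more expensive model has to read.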

The key is making the prompts specific enough to generate deep, substantive research topics rather than surface-level tutorials. Instead of "pen plotter tutorials," you want "occlusion algorithms for generative art with pen plotters."

This is designed as ongoing research and exploration, not a polished product. If you're interested in building something similar, start small: try feeding your notes to a long-context model and ask it to identify 3 topics worth researching. See what it comes up with. Then iterate from there.
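For that first experiment, the only code you really need combines your notes into one string and wraps it in a prompt. This sketch assumes the notes are exported as markdown files in a single folder. The function names, the `# FILE:` separator, and the rule of thumb of about 4 characters per token are assumptions, and real token counts depend on the model's tokenizer.

```python
from pathlib import Path


def aggregate_notes(notes_dir: str, pattern: str = "*.md") -> str:
    """Concatenate every matching note under notes_dir into one context string."""
    parts = []
    for path in sorted(Path(notes_dir).rglob(pattern)):
        body = path.read_text(encoding="utf-8", errors="replace").strip()
        if body:  # skip empty notes
            parts.append(f"# FILE: {path.name}\n{body}")
    return "\n\n".join(parts)


def rough_token_count(text: str) -> int:
    """Very rough estimate, assuming about 4 characters per token."""
    return len(text) // 4


def starter_prompt(context: str) -> str:
    """Wrap the aggregated notes in a first, simple topic-finding prompt."""
    return (
        "Below are my personal notes. Identify 3 topics worth researching that I "
        "have not explored in depth yet. Prefer specific, substantive topics over "
        "surface-level tutorials.\n\n"
        f"{context}"
    )
```

Check `rough_token_count(aggregate_notes("path/to/notes"))` against your model's context limit before sending anything. If the notes don't fit, starting with only the most recent months is a reasonable first cut.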

Ongoing Research & Future Directions

This research assistant is one of many workflows I'm exploring at the intersection of LLMs and personal knowledge systems. It's part of a broader investigation into personal AI systems that derive their value from deep understanding of an individual user's context, knowledge, and goals.

Current Status:

- Automated nightly processing of 1M tokens

- Daily generation of 9 research reports

- Web interface for viewing, favoriting, and querying reports

- Feedback loop for topic refinement

Broader Context: This work sits within a larger exploration of:

- Private Knowledge Systems and their increasing value

- Tools for Thought enhanced with AI capabilities

- The role of long context windows in personalized AI

- Agentic processes for knowledge work

The goal isn't to automate thinking—it's to create systems that help you think better. To surface connections you hadn't noticed. To introduce you to ideas adjacent to your interests. To be surprised by your own knowledge, seen through a different lens.

This is what happens when you invite AI to the edge of your mind and see what it finds there.

If you're interested in building something similar, I'd love to hear about your experience.

This is ongoing research. The project is actively evolving as I learn more about what's valuable, what's possible, and where the interesting questions lie.